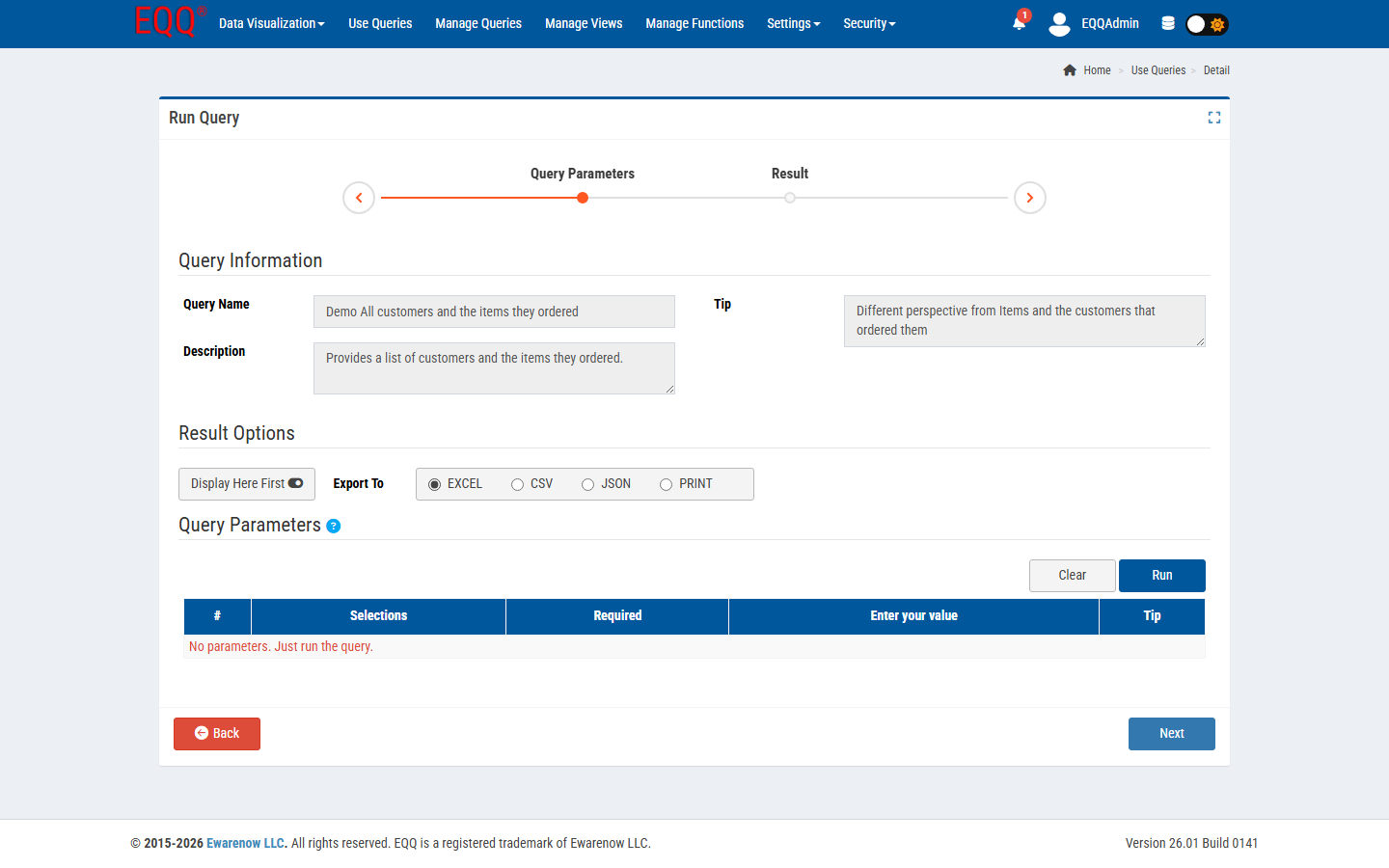

EQQ is built to serve a business-user workload (10k-1M row results, dozens of concurrent users) rather than a data-science workload (billion-row scans). Within that envelope, it scales well. Here is how.

How EQQ handles large result sets automatically

Every query has a Max Rows setting. Below it, EQQ streams rows to the browser grid and offers inline export. Above it, EQQ auto-promotes the run to a background job: the grid closes, the user gets a notification, and the result file lands in Background Jobs for download. The system setting LargeQueryResultThreshhold = 5000 defines the row count at which this promotion triggers: queries returning more than 5,000 rows are automatically handled as background jobs.
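The promotion rule above can be sketched in a few lines. This is an illustration only, not EQQ's code: the function name `delivery_mode` and the returned labels are hypothetical, and the only value taken from the text is the threshold of 5,000 with a strict "more than" comparison.

```python
# Value quoted in the text for the LargeQueryResultThreshhold system setting.
LARGE_QUERY_RESULT_THRESHOLD = 5000


def delivery_mode(row_count: int, threshold: int = LARGE_QUERY_RESULT_THRESHOLD) -> str:
    """Return how a result would be delivered under the rule described above.

    Hypothetical helper: "grid" means rows stream to the browser grid,
    "background_job" means the run is promoted to a background job.
    """
    if row_count < 0:
        raise ValueError("row_count must be non-negative")
    # "More than 5,000 rows" is read as a strict comparison.
    return "background_job" if row_count > threshold else "grid"
```

Under this reading, a result of exactly 5,000 rows still goes to the grid and 5,001 rows is promoted.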

Excel's 1,048,576-row ceiling and how EQQ works around it

When exporting to .xlsx, EQQ detects the ceiling and splits across sheets automatically. CSV has no ceiling. JSON is streamed, so you can pipe it to any script without materializing in memory.
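To make the sheet-splitting arithmetic concrete, here is a minimal sketch of how a large result could be divided across worksheets. It is not EQQ's implementation: the function `plan_sheets`, the sheet names, and the assumption that every sheet repeats one header row are all hypothetical. Only the 1,048,576-row ceiling comes from the text.

```python
EXCEL_MAX_ROWS = 1_048_576  # per-worksheet row ceiling in .xlsx


def plan_sheets(total_rows: int, header_rows: int = 1, max_rows: int = EXCEL_MAX_ROWS):
    """Split total_rows data rows into per-sheet ranges.

    Returns a list of (sheet_name, start, end) tuples, where start is
    inclusive and end is exclusive. Assumes each sheet spends header_rows
    of its capacity on a repeated header (an assumption, not documented
    EQQ behavior).
    """
    capacity = max_rows - header_rows
    if capacity <= 0:
        raise ValueError("header_rows must be smaller than max_rows")
    sheets = []
    start = 0
    while start < total_rows:
        end = min(start + capacity, total_rows)
        sheets.append((f"Sheet{len(sheets) + 1}", start, end))
        start = end
    return sheets
```

With one header row per sheet, each sheet holds 1,048,575 data rows, so a 2,500,000-row result needs three sheets.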

Session and timeout configuration

- Session token expiry - controlled by ExpireTime = 180 minutes (configurable). This governs how long a session token remains valid.
- Background job timeout - separate, configurable setting that lets legitimate long-running exports finish without interruption.
- Clone timeout - separate setting in Web.config for cross-database clones.
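As a rough illustration of what a 180-minute ExpireTime means for a session token, the sketch below checks a token's age against that limit. The function `token_is_valid` is hypothetical, and it assumes a fixed lifetime measured from issue time that ends exactly at the limit. Whether EQQ uses a fixed or sliding window is not stated here.

```python
from datetime import datetime, timedelta, timezone

EXPIRE_TIME_MINUTES = 180  # value quoted in the text for ExpireTime


def token_is_valid(issued_at: datetime, now: datetime,
                   expire_minutes: int = EXPIRE_TIME_MINUTES) -> bool:
    """Return True while the token is younger than expire_minutes.

    Assumes a fixed lifetime counted from issued_at (an assumption,
    not documented EQQ behavior).
    """
    return now - issued_at < timedelta(minutes=expire_minutes)


issued = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
still_valid = token_is_valid(issued, issued + timedelta(minutes=179))
expired = not token_is_valid(issued, issued + timedelta(minutes=180))
```

A long-running export can outlive this window, which is why the background job timeout is a separate setting.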

Concurrency and database-side optimization

The bottleneck at scale is almost always the source database, not EQQ. Add indexes on the columns your parameters filter by; prefer Views over raw table projections; cache slow-changing reference data inside the database itself.
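The effect of indexing a filtered column can be seen in a query plan. The sketch below uses Python's built-in sqlite3 module with an in-memory database purely as a stand-in for the real source database. The table `orders`, the column `customer_id`, and the index name are invented for illustration, and plan output differs between database engines.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params=()) -> str:
    """Return SQLite's query plan for sql as a single string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The fourth column of each plan row holds the human-readable detail.
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

# A parameterized filter, like the ones a query parameter would produce.
sql = "SELECT id, total FROM orders WHERE customer_id = ?"

plan_before = query_plan(conn, sql, (42,))  # full table scan
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, sql, (42,))   # lookup through the new index

print(plan_before)
print(plan_after)
```

Before the index exists the plan reports a scan of the whole table. Afterwards it reports a search using `idx_orders_customer`. The same check on the real source database would use that engine's own plan tooling.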

Monitoring query performance with the audit trail

Every execution is audited with elapsed time. Query the audit table for the 90th percentile of elapsed time and you will find your slow queries fast - often 2-3 queries are responsible for 80% of the wait time.
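A small sketch of that analysis, assuming the audit data has already been pulled into (query name, elapsed milliseconds) pairs. The audit table's real schema is not described here, so the row shape and both function names are hypothetical. The percentile uses the nearest-rank method.

```python
from collections import defaultdict


def p90(values):
    """Nearest-rank 90th percentile of a non-empty list of numbers."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = (9 * len(ordered) + 9) // 10  # ceil(0.9 * n) in integer arithmetic
    return ordered[rank - 1]


def slowest_queries(audit_rows, top_n=3):
    """Return the top_n queries by total elapsed time with their share of the total.

    audit_rows is an iterable of (query_name, elapsed_ms) pairs
    (a hypothetical shape, not the real audit schema).
    """
    totals = defaultdict(float)
    for name, elapsed_ms in audit_rows:
        totals[name] += elapsed_ms
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(name, total / grand_total) for name, total in ranked[:top_n]]
```

Running `slowest_queries` over a week of audit rows shows directly whether a handful of queries account for most of the wait time.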